I recently set out to enable VMware ESX(i) 4 Round Robin load balancing with EMC Celerra (CLARiiON) fibre channel storage. Before I get to the details of how I did it, let me preface this discussion with a bit about how I interpret Celerra storage architecture.

The Celerra is built on CLARiiON fibre channel storage and, as such, it leverages the benefits and successes CLARiiON has built over the years. I believe most CLARiiONs are, by default, active/passive arrays from VMware’s perspective. Maybe more accurately stated, all controllers are active, but each controller has sole ownership of a LUN or set of LUNs. If a host wants access to a LUN, it is preferable to go through the owning controller (the preferred path). Attempts to access a LUN through any controller other than the owning controller will result in a “Trespass” in EMC speak. A Trespass is a shift in LUN ownership from one controller to another in order to service an I/O request from a fabric host. When I first saw Trespasses in Navisphere, I was alarmed. I soon learned that they aren’t all that bad in moderation. EMC reports that a Trespass occurs EXTREMELY quickly and in almost all cases will not cause problems. However, as with any array which adopts the LUN ownership model, stacking up enough I/O requests to force a race between controllers for LUN access will cause a condition known as thrashing. Thrashing causes storage latency and queuing as controllers play tug of war for LUN access. This is why it is important for ESX hosts which share LUN access to consistently access LUNs via the same controller path.

As I said, the LUN ownership model above is the “out-of-box” configuration for the Celerra, also known as Failover Mode 1 in EMC Navisphere. The LUN path going through the owning controller will be the Active path from a VMware perspective. Other paths will be Standby. This is true for both the MRU and Fixed path selection policies. What I needed to know was how to enable Round Robin path selection in VMware. Choosing Round Robin in the vSphere Client is easy enough, but there’s more to it than that, because the Celerra is still operating in Failover Mode 1, where I/O can only go through the owning controller.

So the first step in this process is to read the CLARiiON/VMware Applied Technology Guide, which says I need to change the Failover Mode of the Celerra from 1 to 4 using Navisphere (FLARE release 28 version 04.28.000.5.704 or later may be required). A value of 4 tells the CLARiiON to switch to the ALUA (Asymmetric Logical Unit Access, or Active/Active) mode. In this mode, the controller/LUN ownership model still exists, but instead of transferring ownership of the LUN to the other controller with a Trespass, LUN access is allowed through the non-owning controller. The I/O is passed by the non-owning controller to the owning controller via the backplane and then to the LUN. In this configuration, both controllers are Active and can be used to access a LUN without causing ownership contention or thrashing. It’s worth mentioning right now that although both controllers are active, the Celerra will report the owning controller to ESX as the optimal path, and the non-owning controller as the non-optimal path. This information will be key a little later on.

Each ESX host needs to be configured for Failover Mode 4 in Navisphere. The easiest way to do this is to run the Failover Setup Wizard. Repeat the process for each ESX host. One problem I ran into here is that after making the configuration change, each host and HBA still showed a Failover Mode of 1 in the Navisphere GUI. It was as if the Failover Setup Wizard steps were not persisting. I wasn’t willing to accept this, so I installed the Navisphere CLI and verified each host with the following command:

naviseccli -h <SPA_IP_ADDRESS> port -list -all

Output showed that Failover Mode 4 was configured:

Information about each HBA:

HBA UID: 20:00:00:00:C9:8F:C8:C4:10:00:00:00:C9:8F:C8:C4

Server Name: lando.boche.mcse

Server IP Address: 192.168.110.5

HBA Model Description:

HBA Vendor Description: VMware ESX 4.0.0

HBA Device Driver Name:

Information about each port of this HBA:

SP Name: SP A

SP Port ID: 2

HBA Devicename: naa.50060160c4602f4a50060160c4602f4a

Trusted: NO

Logged In: YES

Source ID: 66560

Defined: YES

Initiator Type: 3

StorageGroup Name: DL385_G2

ArrayCommPath: 1

Failover mode: 4

Unit serial number: Array
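With more than a couple of hosts, scanning this output by eye gets tedious. Here is a minimal sketch of how the check could be scripted, assuming the output has been saved to a file and keeps the one-field-per-line `Field: value` layout shown above. The function name `failover_modes` and the trimmed sample file are my own illustration, not part of the Navisphere CLI.

```shell
#!/bin/sh
# Summarize "Server Name" / "Failover mode" pairs from saved
# `naviseccli ... port -list -all` output (one "Field: value" per line).
# failover_modes is a hypothetical helper name, not a Navisphere command.
failover_modes() {
    awk -F': *' '
        /^[ \t]*Server Name:/   { name = $2 }
        /^[ \t]*Failover mode:/ { print name, "failover_mode=" $2 }
    ' "$1"
}

# Sample input trimmed from the output shown above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
Server Name: lando.boche.mcse
SP Name: SP A
SP Port ID: 2
Failover mode: 4
EOF

failover_modes "$sample"
rm -f "$sample"
```

Any host that still prints a failover mode other than 4 would be the one to revisit in the Failover Setup Wizard.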

Unfortunately, the CLARiiON/VMware Applied Technology Guide didn’t give me the remaining information I needed to actually get ALUA and Round Robin working. So I turned to social networking and my circle of VMware and EMC storage experts on Twitter. They put me on to the fact that I needed to configure SATP for VMW_SATP_ALUA_CX, something I wasn’t familiar with yet.

So the next step is a multistep procedure to configure the Pluggable Storage Architecture on the ESX hosts. More specifically, SATP (Storage Array Type Plugin) and the PSP (Path Selection Plugin), in that order. Duncan Epping provides a good foundation for PSA which can be learned here.

Configuring the SATP tells the PSA what type of array we’re using, and more accurately, what failover mode the array is running. In this case, I needed to configure the SATP for each LUN to VMW_SATP_ALUA_CX which is the EMC CLARiiON (CX series) running in ALUA mode (active/active failover mode 4). The command to do this must be issued on each ESX host in the cluster for each active/active LUN and is as follows:

#set SATP

esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device naa.50060160c4602f4a50060160c4602f4a

esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device naa.60060160ec242700be1a7ec7a208df11

esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device naa.60060160ec242700bf1a7ec7a208df11

esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device naa.60060160ec2427001cac9740a308df11

esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device naa.60060160ec2427001dac9740a308df11
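Typing one line per LUN on every host invites typos. As a rough sketch, the same commands could be generated from a list of device IDs. The helper name `satp_cmds` is hypothetical, and it only prints the commands (with plain double hyphens) so they can be reviewed before anything is run.

```shell
#!/bin/sh
# Print (not run) one SATP command per device so the list can be reviewed
# first. satp_cmds is a hypothetical helper; the esxcli syntax mirrors the
# commands in this post.
satp_cmds() {
    for dev in "$@"; do
        echo "esxcli nmp satp setconfig --config VMW_SATP_ALUA_CX --device $dev"
    done
}

# Two of the device IDs from this post, as an example.
satp_cmds \
    naa.60060160ec242700be1a7ec7a208df11 \
    naa.60060160ec242700bf1a7ec7a208df11
```

Once the printed commands look right, the output could be piped to `sh` on the Service Console of each host.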

The devices you see above can be found in the vSphere Client when looking at the HBA devices discovered. You can also find devices with the following command on the ESX Service Console:

esxcli nmp device list

I found that changing the SATP requires a host reboot for the change to take effect (thank you Scott Lowe). After the host is rebooted, the same command used above should reflect that the SATP has been set correctly:

esxcli nmp device list

Results in:

naa.60060160ec2427001dac9740a308df11

Device Display Name: DGC Fibre Channel Disk (naa.60060160ec2427001dac9740a308df11)

Storage Array Type: VMW_SATP_ALUA_CX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_ow=on;alua_followover=on;{TPG_id=1,TPG_state=ANO}{TPG_id=2,TPG_state=AO}}

Path Selection Policy: VMW_PSP_FIXED

Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=0;lastPat=0,numBytesPending=0}

Working Paths: vmhba1:C0:T0:L61

Once the SATP is set, it is time to configure the PSP for each LUN to Round Robin. You can do this via the vSphere Client, or you can issue the commands at the Service Console:

#set PSP per device

esxcli nmp psp setconfig --config VMW_PSP_RR --device naa.60060160ec242700be1a7ec7a208df11

esxcli nmp psp setconfig --config VMW_PSP_RR --device naa.60060160ec242700bf1a7ec7a208df11

esxcli nmp psp setconfig --config VMW_PSP_RR --device naa.60060160ec2427001cac9740a308df11

esxcli nmp psp setconfig --config VMW_PSP_RR --device naa.60060160ec2427001dac9740a308df11

#set PSP for device

esxcli nmp device setpolicy --psp VMW_PSP_RR --device naa.50060160c4602f4a50060160c4602f4a

esxcli nmp device setpolicy --psp VMW_PSP_RR --device naa.60060160ec242700be1a7ec7a208df11

esxcli nmp device setpolicy --psp VMW_PSP_RR --device naa.60060160ec242700bf1a7ec7a208df11

esxcli nmp device setpolicy --psp VMW_PSP_RR --device naa.60060160ec2427001cac9740a308df11

esxcli nmp device setpolicy --psp VMW_PSP_RR --device naa.60060160ec2427001dac9740a308df11

Once again, running the command:

esxcli nmp device list

Now results in:

naa.60060160ec2427001dac9740a308df11

Device Display Name: DGC Fibre Channel Disk (naa.60060160ec2427001dac9740a308df11)

Storage Array Type: VMW_SATP_ALUA_CX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_ow=on;alua_followover=on;{TPG_id=1,TPG_state=ANO}{TPG_id=2,TPG_state=AO}}

Path Selection Policy: VMW_PSP_RR

Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=0;lastPat=0,numBytesPending=0}

Working Paths: vmhba1:C0:T0:L61

Notice the Path Selection Policy has now changed to Round Robin.
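Checking this line for every LUN on every host is easy to get wrong by eye. A small sketch of an automated check follows, assuming the `esxcli nmp device list` output has been saved to a file, that each device block starts with a line beginning with `naa.`, and that the policy appears on a `Path Selection Policy:` line as shown above. The function name `non_rr_devices` is made up for this example, and the FIXED entry in the sample is contrived so the check has something to report.

```shell
#!/bin/sh
# List devices whose Path Selection Policy is not VMW_PSP_RR, reading saved
# `esxcli nmp device list` output. non_rr_devices is a hypothetical name.
non_rr_devices() {
    awk '
        /^naa\./ { dev = $1 }
        # The colon right after "Policy" keeps the "Device Config" line out.
        /^[ \t]*Path Selection Policy:/ {
            if ($NF != "VMW_PSP_RR") print dev, $NF
        }
    ' "$1"
}

# Contrived sample: one device left on FIXED, one already on Round Robin.
sample=$(mktemp)
cat > "$sample" <<'EOF'
naa.60060160ec2427001cac9740a308df11
    Path Selection Policy: VMW_PSP_FIXED
naa.60060160ec2427001dac9740a308df11
    Path Selection Policy: VMW_PSP_RR
EOF

non_rr_devices "$sample"
rm -f "$sample"
```

An empty result would mean every listed device is already on Round Robin.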

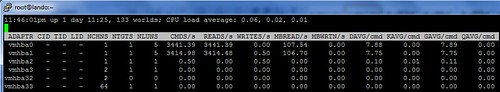

I’m good to go, right? Wrong. I struggled with this last bit for a while. Using ESXTOP and IOMETER, I could see that I/O was still only going down one path instead of two. Then I remembered something Duncan Epping had said to me in a conversation a few days earlier. He mentioned something about the array reporting optimal and non-optimal paths to the PSA. I printed out a copy of the Storage Path and Storage Plugin Management with esxcli document from VMware and took it to lunch with me. The answer was buried on page 88. The nmp roundrobin setting useANO is configured by default to 0, which means unoptimized paths reported by the array will not be included in Round Robin path selection unless optimized paths become unavailable. Remember I said early on that the optimal and non-optimal paths reported by the array would be a key piece of information? We can see this in action by looking at the device list above. The very last line shows working paths, and only one path is listed for Round Robin use: the optimized path reported by the array. The fix here is to issue the following command, again on each host for all LUNs in the configuration:

#use non-optimal paths for Round Robin

esxcli nmp roundrobin setconfig --useANO 1 --device naa.50060160c4602f4a50060160c4602f4a

esxcli nmp roundrobin setconfig --useANO 1 --device naa.60060160ec242700be1a7ec7a208df11

esxcli nmp roundrobin setconfig --useANO 1 --device naa.60060160ec242700bf1a7ec7a208df11

esxcli nmp roundrobin setconfig --useANO 1 --device naa.60060160ec2427001cac9740a308df11

esxcli nmp roundrobin setconfig --useANO 1 --device naa.60060160ec2427001dac9740a308df11
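Rather than maintaining the device list by hand, the IDs could be pulled from the device list itself. This is only a sketch under a few assumptions: the `esxcli nmp device list` output has been saved to a file, each device block starts with a `naa.` line, and CLARiiON LUNs can be recognized by a display name starting with `DGC`, as in the output in this post. The helper name `useano_cmds` and the non-DGC sample entry are invented for illustration, and the function prints commands instead of running them.

```shell
#!/bin/sh
# Read saved `esxcli nmp device list` output and print (not run) a useANO
# command for every device whose display name starts with "DGC".
# useano_cmds is a hypothetical helper name.
useano_cmds() {
    awk '
        /^naa\./ { dev = $1 }
        /Device Display Name:[ \t]*DGC/ {
            print "esxcli nmp roundrobin setconfig --useANO 1 --device " dev
        }
    ' "$1"
}

# Sample: one CLARiiON LUN from this post plus a made-up non-DGC device.
sample=$(mktemp)
cat > "$sample" <<'EOF'
naa.60060160ec2427001dac9740a308df11
    Device Display Name: DGC Fibre Channel Disk (naa.60060160ec2427001dac9740a308df11)
naa.EXAMPLE_LOCAL_DISK
    Device Display Name: Local Disk (naa.EXAMPLE_LOCAL_DISK)
EOF

useano_cmds "$sample"
rm -f "$sample"
```

Filtering on the display name is a convenience, not a guarantee, so the printed list is worth a quick review before it is run on a host.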

Once again, running the command:

esxcli nmp device list

Now results in:

naa.60060160ec2427001dac9740a308df11

Device Display Name: DGC Fibre Channel Disk (naa.60060160ec2427001dac9740a308df11)

Storage Array Type: VMW_SATP_ALUA_CX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_support=on;explicit_allow=on;alua_followover=on;{TPG_id=1,TPG_state=ANO}{TPG_id=2,TPG_state=AO}}

Path Selection Policy: VMW_PSP_RR

Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=1;lastPathIndex=1: NumIOsPending=0,numBytesPending=0}

Working Paths: vmhba0:C0:T0:L61, vmhba1:C0:T0:L61

Notice the change in useANO which now reflects a value of 1. In addition, I now have two Working Paths – an optimized path and an unoptimized path.
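Both things I was looking for here, the useANO value and the number of working paths, can be pulled out of the device list mechanically. A minimal sketch follows, assuming the `esxcli nmp device list` output has been saved to a file and keeps the field names shown above. The function name `path_summary` is my own invention.

```shell
#!/bin/sh
# For each device in saved `esxcli nmp device list` output, print the useANO
# value and the number of working paths. path_summary is a hypothetical name.
path_summary() {
    awk '
        /^naa\./ { dev = $1; ano = "?" }
        /Path Selection Policy Device Config:/ {
            # "useANO=" is 7 characters; keep only the digits after it.
            if (match($0, /useANO=[0-9]+/))
                ano = substr($0, RSTART + 7, RLENGTH - 7)
        }
        /Working Paths:/ {
            sub(/.*Working Paths:[ \t]*/, "")
            n = split($0, paths, /,[ \t]*/)
            print dev, "useANO=" ano, "paths=" n
        }
    ' "$1"
}

# Sample input trimmed from the output shown above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
naa.60060160ec2427001dac9740a308df11
    Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=1;lastPathIndex=1: NumIOsPending=0,numBytesPending=0}
    Working Paths: vmhba0:C0:T0:L61, vmhba1:C0:T0:L61
EOF

path_summary "$sample"
rm -f "$sample"
```

A device that reports `useANO=0` or a single working path would be one where Round Robin is still confined to the optimized path.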

I fired up ESXTOP and IOMETER which now showed a flurry of I/O traversing both paths. I kid you not, it was a Clark Griswold moment when all the Christmas lights on the house finally worked.

So it took a while to figure this out, but with some reading and the help of experts, I finally got it, and I was extremely jazzed. What would have helped is if VMware’s PSA were more plug and play with various array types. For instance, why can’t PSA recognize ALUA on the CLARiiON and automatically configure SATP for VMW_SATP_ALUA_CX? Why is a reboot required for an SATP change? PSA configuration in the vSphere Client might also have been convenient, but I recognize it has diminishing returns with a large number of hosts and/or LUNs to configure. Scripting and the CLI are the way to go for consistency and automation. Or how about PSA configuration via Host Profiles?

I felt a little betrayed and confused by the Navisphere GUI reflecting Failover Mode 1 after several attempts to change it to 4. I was looking at host connectivity status. Was I looking in the wrong place?

Lastly, end-to-end documentation on how to configure Round Robin would have helped a lot. EMC got me part of the way there with the CLARiiON/VMware Applied Technology Guide, but left me hanging, making no mention of the PSA configuration needed. I gather that the end game for EMC multipathing today is PowerPath, which is fine. I’ll get to that, but I really wanted to do some testing with native Round Robin first, if for no other reason than to establish a baseline to compare PowerPath against once I get there.

Thanks again to the people I leaned on to help me through this. It was the usual crew who can always be counted on.

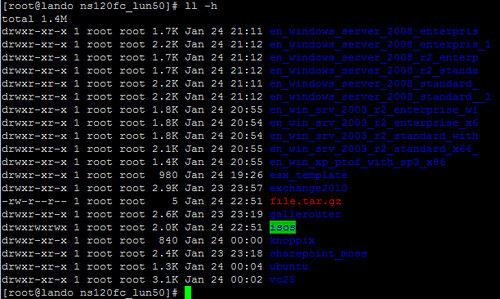
