A few months ago I authored a blog post titled Tech Support mode Warnings. It dealt with the yellow balloon warnings attached to a host object in vCenter when Local Tech Support Mode was enabled (as well as Remote Tech Support via SSH).

Not surprisingly, the warnings are back in vSphere 5, albeit with slightly different wording.

Configuration Issues

ESXi Shell for the host has been enabled

SSH for the host has been enabled

In the previous blog post, I referenced VMware’s KB article which stated there was no way to hide the messages while the offending configuration was in place. That may have been the official stance but it certainly wasn’t the case from a technical standpoint as there are a few workarounds to suppress the messages.

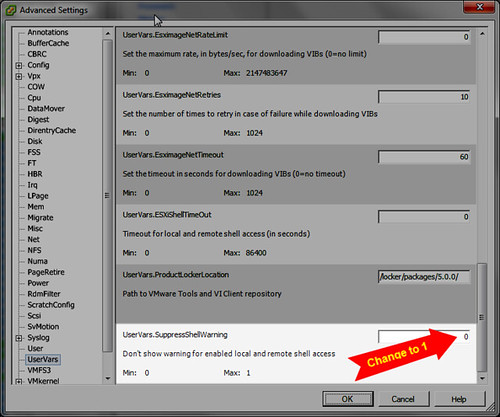

VMware has shown us a little love in vSphere 5. Both messages can be suppressed by modifying an Advanced Setting on each host. Even better, no host reboot or service restart is required. In my testing, Maintenance Mode was not required either, and the change could be made with running VMs on the host. If you’re wondering whether this is safe to perform in a running production environment, take a step back and consider not only the immediate impact of the task but also the longer-term impact of the change: by this point you’ve already enabled, or you’re thinking of enabling, the Local ESXi Shell and/or remote SSH via the network. Reference your security plan or hardening guidelines before proceeding.

Following is the tweak to suppress the warnings, which I found in VMware KB 2003637: set the host Advanced Setting UserVars.SuppressShellWarning to a value of 1.

Again, this is performed on each host, either when it is built or after it is deployed. In the figure above, the change is made via the vSphere Client, but it can also be scripted from the command line with esxcfg-advcfg.
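For anyone who wants to script it, here is a minimal sketch of what that might look like for a handful of hosts. It uses the same option path as the one-liner a reader posted in the comments below. The host names, the use of root over SSH, and the DRY_RUN switch are my own assumptions for illustration, not something taken from the KB article, so adjust them to your environment.

```shell
#!/bin/sh
# Sketch only: suppress the ESXi Shell / SSH warnings on several ESXi 5 hosts.
# Assumptions (not from the KB): SSH is already enabled on each host, root
# login is permitted, and the host names passed in are placeholders.

# Advanced option path; 1 suppresses the warnings, 0 should bring them back.
OPTION="/UserVars/SuppressShellWarning"

# Print the esxcfg-advcfg command that sets the option to the given value.
suppress_cmd() {
    printf 'esxcfg-advcfg -s %s %s\n' "$1" "$OPTION"
}

# Apply the value to every host listed after it.
# DRY_RUN defaults to 1, which only prints what would be run.
apply_to_hosts() {
    value="$1"
    shift
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            printf 'ssh root@%s "%s"\n' "$host" "$(suppress_cmd "$value")"
        else
            ssh "root@$host" "$(suppress_cmd "$value")"
        fi
    done
}

# Example (hypothetical host names):
#   DRY_RUN=0; apply_to_hosts 1 esx01.lab.local esx02.lab.local
```

With the default dry run you can review the commands before touching anything. Once applied, running esxcfg-advcfg -g /UserVars/SuppressShellWarning on a host reports the current value.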

Somewhat related, in the same yellow balloon area you may also see a host warning message which states “This host currently has no management network redundancy” as shown below:

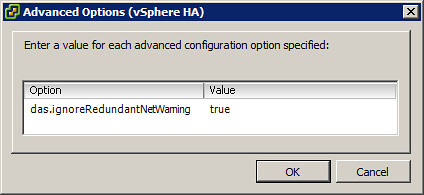

In a production environment, you’ll want to resolve the issue by adding network redundancy for the Management Network. In a lab or test environment, however, a single Management Network uplink may be acceptable, yet you still want the warning to disappear. The warning is squelched by configuring an HA Advanced Option, das.ignoreRedundantNetWarning, with a value of true as shown below. After that step is completed, run Reconfigure for vSphere HA on the host and the warning will disappear. The Reconfigure for HA step must be applied separately to each host with a non-redundant Management Network configuration.

Update 9/5/11: Duncan Epping also wrote on this subject back in July. Be sure to bookmark his blog, subscribe to his RSS feed, and follow him on Twitter. He is a nice guy and very approachable.

Update 10/15/12: Added section for “No Management Network Redundancy” which I should have included to begin with.

🙂

http://www.yellow-bricks.com/2011/07/21/esxi-5-suppressing-the-localremote-shell-warning/

Nice job! I am not real surprised this has happened. Our articles look similar but I honestly hadn’t seen yours. A guideline I’ve been following sometimes is that if I perform an internet search on something & find the solution in a VMware KB article or VMware communities forum post first (as opposed to someone’s blog), I’ll probably write about it. Solutions may exist on a blog somewhere and my writing will be redundant but that’s ok with me. If it helps someone else in the future or even myself, then my goal has been accomplished.

Thanks for the heads up,

Jas

I know you didn’t read mine, Jason. That was not the intention of me posting it here 🙂

Thanks Jason! If it wasn’t for your post I never would have figured this out.

This shuts off the warning with a command-line one-liner:

esxcfg-advcfg -s 1 /UserVars/SuppressShellWarning

Thanks Mick!

Jas

Thanks a lot!!!

That was driving me nuts.

Mine simply happened after doing an update from 4.1.0,433742 to 4.1.0,502767

Anyway all good now